Web server performance... sick of the tall tales???

Are you? I certainly am.

Here are some of my experiences in getting the best out of linux servers in a high performance web environment. My current experience is in providing Magento and WordPress servers, and is based on more decades of technical experience than I care to remember. I am a firm believer in the KISS ( Keep It Simple, Stupid ) principle. Bear this in mind with some of my suggestions: they're usually made so that overall performance can be understood more simply, and not to provoke a Holy War!

In this post, I'll provide an overview of how to get the best out of your server, be it real or virtual. Unfortunately, a lot of this will not be relevant to those on shared servers, but some will.

The intended audience for this post is anyone who has some responsibility for looking after a PHP based website - WordPress, Drupal, Magento, Invision, Joomla!... the list is pretty long. Those who are handling huge volumes of traffic, and have groups of people managing their sites on a fulltime basis will probably not benefit that much, but you never know. I've used this strategy to deliver performant ( and secure! ) ecommerce platforms that were taking in $300,000/day during the busy times, and Magento is not a lightweight platform.

For those of a TL;DR persuasion...

- Size your server correctly

- Cache everything

- Use a CDN

- Site your server near your intended audience

- Monitor it constantly, reconfigure, rinse and repeat

And that's it. If your site is still slow, then you can add 'use better quality developers' to the list. However, this is fodder for a separate post... analyzing and fixing code is always time consuming, and therefore expensive.

In more detail... lots more detail!

Size your server correctly

I almost always use Virtual Servers of some kind or another these days. They have their shortcomings, but the fact that they're supported by someone else does allow me a lot more sleep than I otherwise would get. In addition, they're easily upgradeable, allowing for cost savings at startup, and ramping up as the site becomes more popular is child's play - at worst a reboot away - as long as you've chosen a provider with plenty of space to expand. Initially, the choice of server is always a guess. If you're developing a site, you can fine-tune as you go along; if it's a transfer, then you can look at the performance of the current one. Over time you get a feel for a good starting point ( eg for Magento, expected traffic * number of products * number of storefronts * number of languages is a good indicator ), which does help a bit.

As I said, if it's a VM with plenty of growth potential, don't sweat it... the cost of renting the platform is almost certainly trivial compared to the overall release costs.

Cache everything

Here's where it gets really tricky. On your server, the worst performing resource is the disks. This is even truer on a VM, where they're usually remote, and sharing the same network as everyone else. So do everything you can to just not touch them unless you absolutely have to. For reasons that will become more obvious later, this section mainly focuses on the generation of the dynamic part of the web page - the html skeleton that all of the resources ( css, js, images and so on ) plug into.

For the user-facing part of most websites, this is pretty easy. Purchases on eCommerce sites and comments are about the only things - maybe 1% of the total traffic - that actually need to make changes to stored info. This means that all other info only needs to be read from disk once, and from that point on can be read from memory ( there are other things on the admin side - supporting logging and audit functionality for example - which do muddy the water a bit, but let's set them aside for now ). As there are a lot of resources required to build that skeleton, I'll treat them separately.

The Database

This is usually MySQL. Most certainly the starting point of a Holy War! Having started using it at MySQL 3 after many years with 'proper' RDBMSes, I agreed with the critics... it didn't even have views! HOWEVER. That was then, and this is now, and the performance and functionality issues have been addressed, at least to the level that you can easily work around them. I ( currently! ) recommend that you use MySQL v. 5.6, specifically the version from Percona. Their InnoDB engine is ACID compliant, fully functional and noticeably faster than the others ( the current MariaDB releases also use that engine, but I find it harder to monitor ). To get it to be able to cache all of those requests whilst generating a page ( Magento can easily generate more than 100 for example ), you need to feed it with copious amounts of memory. How much??

There are a number of simple scripts on the net that can give you a breakdown on how your configuration is working... https://launchpad.net/mysql-tuning-primer/trunk/1.6-r1/+download/tuning-... and https://github.com/major/MySQLTuner-perl/zipball/master spring to mind. These will highlight any shortcomings in your current config. Act on them to give the InnoDB engine ( your default! ) as much cache as it needs.
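As a rough illustration of the kind of figure those scripts report, the sketch below works out the InnoDB buffer pool hit ratio from two counters that `SHOW GLOBAL STATUS` exposes. The function name and the sample figures are invented for this example; only the two status variable names come from MySQL itself.

```python
def buffer_pool_hit_ratio(read_requests, disk_reads):
    """Fraction of InnoDB logical reads that were served from memory.

    read_requests: value of Innodb_buffer_pool_read_requests (logical reads)
    disk_reads:    value of Innodb_buffer_pool_reads (reads that went to disk)
    """
    if read_requests <= 0:
        return 0.0  # no traffic yet, so there is nothing meaningful to report
    return 1.0 - (disk_reads / read_requests)


# Sample counters, invented for illustration only.
ratio = buffer_pool_hit_ratio(read_requests=2_000_000, disk_reads=10_000)
print(f"buffer pool hit ratio: {ratio:.2%}")
```

A ratio that stays well short of 100% on a busy site is a hint that the buffer pool is too small for the data being read.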

PHP

There is an opcode cache available for all versions of PHP, from legacy 5.3 ( for those of you stuck with it ) up to 7. This allows you to store bytecode ( 'compiled' ) versions of blocks of code for future use. PHP 5.3 and 5.4 support APC, which I have found to be absolutely rock solid ( and I'm talking literally billions of accesses ) in production use. Later versions can use Zend's opcache, which is built into the language itself. This can often double the speed of your site.

The web server

One that is often forgotten: some webservers can cache the location of resource files, which eases the load on the operating system.

The operating system

Any excess memory on the server will be used by the operating system as a 'buffer cache', which acts ( unsurprisingly ) like a buffer between the OS and the physical disks. This will dramatically speed up subsequent reads of common resources by delivering them straight from memory.
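To see how much memory the kernel is currently using this way, you can read `/proc/meminfo` on linux. The sketch below is a minimal parser for that file; the function names are mine, and it assumes the usual `Buffers` and `Cached` fields are present.

```python
import os


def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a {field name: value} dict (mostly kB)."""
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue  # not a "Name: value" line
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])
    return values


def page_cache_kb(meminfo):
    """Memory the kernel is using for buffers plus page cache, in kB."""
    return meminfo.get("Buffers", 0) + meminfo.get("Cached", 0)


# /proc/meminfo only exists on linux, so check before reading it.
if os.path.exists("/proc/meminfo"):
    with open("/proc/meminfo") as handle:
        info = parse_meminfo(handle.read())
    cache_mb = page_cache_kb(info) // 1024
    total_mb = info.get("MemTotal", 0) // 1024
    print(f"buffers + cache: {cache_mb} MB of {total_mb} MB")
```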

Expiry headers

These are added to static resources by the web server, and tell the client's web browser to cache them locally on the device for a specific time, which makes subsequent loading of them almost instant: local, not internet, speeds.
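In practice the web server adds these headers for you, but it helps to know what they look like. Here is a small sketch that builds the two standard HTTP headers involved, `Cache-Control` and `Expires`; the function name and the one-week lifetime are just examples.

```python
import time
from email.utils import formatdate


def expiry_headers(max_age_seconds, now=None):
    """Build the response headers that let a browser cache a static file."""
    if now is None:
        now = time.time()
    return {
        # Modern browsers use max-age; Expires is the older equivalent.
        "Cache-Control": f"public, max-age={max_age_seconds}",
        "Expires": formatdate(now + max_age_seconds, usegmt=True),
    }


# Example: let browsers keep a stylesheet for a week.
for name, value in expiry_headers(7 * 24 * 3600).items():
    print(f"{name}: {value}")
```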

And finally, the application itself

Most applications - WordPress for example - offer 'Full Page Cache' extensions. These just store the complete html skeleton for a page, and then require almost no effort - no database access, no server side processing and so on - to deliver it to the client.

As you can see, these caches are layered... if there's an FPC version of the page, it'll be delivered; otherwise, the application will build the page up, using the PHP opcache and MySQL cache resources if they're available. No harm in this at all, for sure!
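The layering can be pictured with a toy model. This is only a sketch of the idea in Python, not how any particular FPC extension works; the function names and the stand-in page builder are invented for the example.

```python
def cached_query(sql, query_cache, run_query):
    """Database layer: only run the query when its result is not cached."""
    if sql not in query_cache:
        query_cache[sql] = run_query(sql)
    return query_cache[sql]


def serve_page(url, full_page_cache, build_page):
    """Outer layer: return (html, source), trying the full page cache first."""
    if url in full_page_cache:
        return full_page_cache[url], "full page cache"
    html = build_page(url)  # the expensive path: code execution plus queries
    full_page_cache[url] = html
    return html, "built"


# Stand-ins for the real application, invented for this example.
query_cache, page_cache = {}, {}


def build_page(url):
    title = cached_query("SELECT title FROM pages", query_cache, lambda sql: "Home")
    return f"<html><h1>{title}</h1><!-- {url} --></html>"


print(serve_page("/", page_cache, build_page)[1])  # first request builds the page
print(serve_page("/", page_cache, build_page)[1])  # second request hits the cache
```

The second request never reaches the page builder at all, which is the whole point: each layer only does work when the layer above it misses.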

Use a CDN

I'm using the oldschool definition of a content delivery network, which will just deliver the static resources ( those css, js, jpg files mentioned earlier ) from a local resource. What this does is to take away the responsibility for the 'heavy lifting' of the web page from your server, which just leaves it to do the clever stuff of page generation, order taking, etc. At a rough approximation, less than 100KB of a 1.5MB site is the html skeleton, so you've gained well over an order of magnitude of bandwidth for your server if nothing else. I'm not a fan of these services which take over your DNS, and then just proxy your site for the clever bits - that's going to slow it down, not speed it up!

So the advantage of using a CDN is that it greatly reduces the amount of work that your server has to do: not only in finding and delivering resources, but also by easing the strain on your network interface. Whilst this may seem a strange thing to mention when your customers are talking over the extremely slow internet, there may be a lot of them, and you may be artificially restricted by your ISP's plan on what you have available. In addition, if you're using a multi-server platform, then traffic between them also counts... I have a few war stories about that, I can tell you!

Site your server near your intended audience

With http, every file request has an overhead of dead time: the time it takes for your browser to connect to the web server and get a response. This latency is controlled by the laws of physics, so not much can be done about it. As an extreme case, pinging one of my servers in Dublin, Ireland from the paradise I'm happy to call home in Christchurch, New Zealand takes about 0.3 seconds. Whilst your browser may request 5 resources at a time, having 250 resources on a page ( not uncommon unfortunately ) will add significantly to its load time purely because of the server's location. This is why most of my servers are in Sydney, Australia ( 55ms ) or the West Coast of the USA ( 160ms from here, 140ms from Ireland, and 60ms from Montreal ). Use of a CDN will limit that hit and allow you to centralise your resources, but if you're after every millisecond ( ie you're in eCommerce! ) then take it into account.
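To put rough numbers on that, here is a back-of-the-envelope model in Python. It assumes each request costs one full round trip and that the browser keeps a fixed number of requests in flight, which ignores transfer time and connection reuse, so treat the result as an indication rather than a measurement. The function name is mine.

```python
import math


def latency_overhead_seconds(resources, parallel_requests, round_trip_seconds):
    """Dead time spent waiting on round trips for a page's resources."""
    # Requests go out in batches; each batch waits for one round trip.
    rounds = math.ceil(resources / parallel_requests)
    return rounds * round_trip_seconds


# Figures from the text: 250 resources, 5 at a time.
for place, rtt in [("Dublin", 0.3), ("US West Coast", 0.160), ("Sydney", 0.055)]:
    wait = latency_overhead_seconds(250, 5, rtt)
    print(f"{place}: {wait:.2f}s of latency")
```

With those figures there are 50 batches of requests, so the same page costs about 15 seconds of waiting from Dublin against under 3 seconds from Sydney, before a single byte of content is counted.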

Monitor it constantly, reconfigure, rinse and repeat

The thing to remember is that your site is not a static object. Not only are you constantly updating it, but your audience is ( hopefully! ) increasing. It is imperative that you ensure that there is no shortfall in the server resources that you're using to deliver it. Resources like http://munin-monitoring.org/ or http://www.cacti.net/ ( or any of the tools built on RRDTool, authored by https://tobi.oetiker.ch/hp/ ) allow for monitoring of resource use over time, providing you with the information needed to proactively update your server.
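The graphs from those tools are what you act on, but the decision behind them is simple enough to sketch. The snippet below flags a resource whose peak use is eating into a safety margin; the function name, the 20% margin and the sample readings are all assumptions of mine, not anything munin or cacti provide.

```python
def needs_attention(samples, capacity, headroom=0.2):
    """True if peak usage leaves less than `headroom` of the capacity free."""
    if not samples:
        return False  # nothing recorded yet
    return max(samples) > capacity * (1.0 - headroom)


# Invented readings: memory used ( GB ) on an 8GB server over a week.
memory_used_gb = [3.1, 3.4, 5.2, 6.9, 4.0, 3.8, 3.3]
if needs_attention(memory_used_gb, capacity=8.0):
    print("peak memory use is within 20% of capacity: time to reconfigure or upgrade")
```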

As an aside, PLEASE communicate with your administrator if you're going to send out a mailshot, advertise on TV or do anything that will generate a spike in traffic. Preparing for this - by upgrading the server for example - is the work of a few minutes and costs pence, whereas the alternative of a failed website not only wastes time and money and hurts your credibility, but also sours the internal relationships needed to keep your site profitable.

A note on DNS

When using a CDN to deliver your static content, the provider usually offers a geographically local endpoint to deliver from. Providers such as Amazon Cloudfront give you a horrible URL that you really want to replace with a nice SEO friendly one from your own domain. If your DNS provider is not geo-aware, then you will have your content delivered from the endpoint it thinks is closest, which may not be the one closest to your visitor. Down here in the Antipodes this is really important, as 40ms latency from Sydney is far better than 150ms ( or worse! ) from the West coast of the USA.

So how to put it together?

There are three major web servers out there, apache, nginx and litespeed ( yes, I know, but IIS doesn't run on linux ). The most common is apache, followed by nginx and then litespeed. Apache comes in two current versions: 2.2 and 2.4, the latter being released to attempt to address some of the performance problems of 2.2 ( this is a bit unfair, but is what they published ). nginx is a relative newcomer, and differs from apache ( apart from its configuration files ) in that it is 'event-driven and asynchronous' - ie it reacts to a request, rather than waiting for a thread to become available to process it. This makes nginx much more lightweight and less resource hungry than apache ( in addition, it *only* acts as a web server, and hands off server-side processing to external processes, rather than built-in modules ). The advantage of litespeed is that it is a drop in replacement for apache, and is also 'event-driven and asynchronous', although it does seem to cost.

Of these three, I choose nginx, and for two reasons ( here comes the next holy war! ):

- It is very fast

- By splitting the web server and server side processing ( php-fpm ) into separate 'processes', I am able to simply monitor and tune both independently

Underneath that, there is PHP. I use the latest version I can get away with whilst staying conservative - production website delivery is *not* the place for bleeding edge technology if you want a) sleep and b) job security! This usually means PHP 5.6 ( older for old versions of Magento ), and a well configured opcode cache. I do 'eat my own dogfood', and you'll often see my own website running PHP 7 or HHVM for example - just not that of my customers!

Percona MySQL version 5.6, as mentioned earlier. Regularly reconfigured on monitoring results. I do tend to use binary logging ( onto a separate disk! ) for ecommerce sites as the safest backup strategy.

Linux - at the moment I'm using CentOS by default; before that, debian squeeze offered the best mix of packages. The only exception is with Amazon EC2, where I use their version of linux on the assumption that they know their hardware better than anyone else. Base configuration is updated to allow for more open files and extra network resources than default ( plus security stuff outside the scope of this document ), just to make sure the OS isn't the performance bottleneck.
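If you want to check what the open files limit currently is for a process, Python's standard `resource` module ( Unix only ) can report it. This is just a quick way of looking at the value; the helper names are mine, and raising the limit is done in the system configuration, not here.

```python
import resource


def open_file_limits():
    """Return the (soft, hard) limit on open file descriptors for this process."""
    return resource.getrlimit(resource.RLIMIT_NOFILE)


def describe(limit):
    """Render a limit for humans; RLIM_INFINITY means no limit is set."""
    return "unlimited" if limit == resource.RLIM_INFINITY else str(limit)


soft, hard = open_file_limits()
print(f"open files: soft limit {describe(soft)}, hard limit {describe(hard)}")
```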

Finally, these will usually fit on a single server for a surprisingly large website ( especially with the CDN installed ), as the PHP processes ultimately need raw CPU power for performance, and the Database needs memory ( yes, it's an oversimplification, but anyone who tells you that server tuning is a science probably hasn't much experience in it! ). As soon as you split it up, you have TCP stacks to build up and tear down, and network traffic between servers, and that's always slower than picking it out of local memory.

But what about...?

I expect to be called an idiot for a lot of this - it's a regular occurrence on linkedin for example - but bear in mind I did start managing Unix a long time ago ( this is a decade before Linus Torvalds invented linux ), and am still in business! I am also old enough to have a decent grounding in RDBMSes, as well as having left the https://en.wikipedia.org/wiki/Dunning%E2%80%93Kruger_effect part of my career well behind. Let's try and head off some of the more obvious observations... I'll try and keep this updated.

- I tried that and it made absolutely no difference

That just means that the bottleneck in your system is elsewhere, for example, swapping apache for litespeed when the database is crippled by lack of resource.

- My developers just told me...

Only the best developers will have any idea of how to deliver secure, scalable, production ready code. Most have only tested that their code functions correctly across the office LAN, with a trivial amount of data.

- You need a loadbalancer for that

Why? If the answer is to remove a single point of failure in your infrastructure, then I'll agree to some extent ( but are you actually removing one, or adding a new SPOF and increasing complexity... KISS, remember ). However, the whole point of going to a virtual solution is that it's Someone Else's Problem ( RIP Douglas Adams ), so will it help that much?

If it's for bandwidth, then here's an example. A random provider I use ( linode's $80/mo plan - I'm sure Amazon is bigger, but the info is so hard to find ) offers 1Gb/s of traffic out. The html skeleton of an average web page is 100KB. This is ascii text, so will probably compress by an order of magnitude, but let's say 5 times for this example, so 20KB = 160Kbit. So in theory we can deliver 6,250 pages every second, 22.5 million/hour or well over half a billion pages per day. And if you need more, you can scale up by an order of magnitude with this provider without moving.
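If you want to plug in your own figures, the arithmetic is easy to reproduce. The sketch below uses the numbers quoted above ( a 1Gb/s link, a 100KB skeleton, 5 times compression ) and treats 1KB as 1,000 bytes; the function name is mine, and the result is a theoretical ceiling that ignores protocol overhead.

```python
def pages_per_second(link_bits_per_second, page_bytes, compression_ratio):
    """Theoretical number of compressed page skeletons a link can carry per second."""
    compressed_bits = (page_bytes / compression_ratio) * 8
    return link_bits_per_second / compressed_bits


per_second = pages_per_second(
    link_bits_per_second=1_000_000_000,  # 1Gb/s outbound
    page_bytes=100_000,                  # 100KB html skeleton
    compression_ratio=5,                 # conservative compression
)
print(f"{per_second:,.0f} pages/second")
print(f"{per_second * 3600:,.0f} pages/hour")
print(f"{per_second * 86400:,.0f} pages/day")
```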

Technology coming over the horizon

mod/ngx_pagespeed

This is an attempt to post process the output from your website, to improve its performance. There are many modules available, for example to resample images, remove whitespace, inline small resources to reduce resource count, sprite images, and so on. Whilst this may be a cost-effective way of doing this, and fairly efficient as it does cache its results, it's no substitute for decent programming skills in the first place. In addition, there is not yet any decent integration with content management system backends to allow for management of that cached resource, leading to more complex day-to-day maintenance.

http2 and spdy

As Google is now rewarding people for delivering everything via an encrypted connection, it's beneficial to look at performance improvements available with these new extensions to web delivery. What they effectively do is to re-use a connection between server and browser, which makes best use of the available bandwidth, greatly reducing the effect of latency. This makes the use of a large number of resources a lot less of an overhead on a website.

Finally, an example of what can go wrong

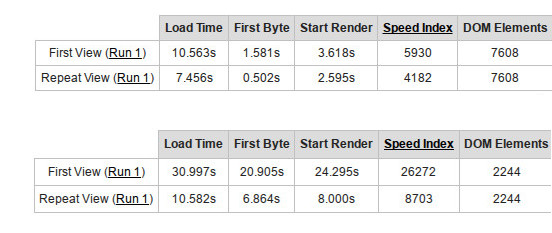

We've been looking after this site for about 5 years. It's a Magento CE site, and to be blunt ( as I'm well known for being... ) it's a bit of a mess, with about 350 separate resources making up this homepage ( and they have this horrible habit of sending massive mailouts without staggering them, which causes a bit of a spike! ). However, it's been running OK until now, and sales have been good. The management felt that they could get a better deal and have migrated the site to a local 'magento specialist'. Here's an excerpt from the webpagetest.org reports before and after the migration. Note the after data was taken at 7am local time... not the busiest time for an ecommerce site: the before one was taken at 3pm.

Although neither is brilliant, I really doubt they'll be getting many sales any more.